
10/29/2019 Vhdl Binary To Integer Converter Word

Algorithm. The algorithm operates as follows: Suppose the original number to be converted is stored in a register that is n bits wide. Reserve a scratch space wide enough to hold both the original number and its BCD representation; n + 4 × ceil(n/3) bits will be enough. Each decimal digit takes at most 4 bits to store in binary. Then partition the scratch space into BCD digits (on the left) and the original register (on the right).

For example, if the original number to be converted is eight bits wide, the scratch space would be partitioned as follows:

    100s Tens Ones   Original
    0010 0100 0011   11110011

The diagram above shows the binary representation of 243 (decimal) in the original register, and the BCD representation of 243 on the left. The scratch space is initialized to all zeros, and then the value to be converted is copied into the 'original register' space on the right:

    0000 0000 0000   11110011

The algorithm then iterates n times.

On each iteration, the entire scratch space is left-shifted one bit. However, before the left-shift is done, any BCD digit which is greater than 4 is incremented by 3. The increment ensures that a value of 5, incremented and left-shifted, becomes 16, thus correctly 'carrying' into the next BCD digit. The double-dabble algorithm, performed on the value 243 (decimal), looks like this:

    0000 0000 0000   11110011   Initialization
    0000 0000 0001   11100110   Shift
    0000 0000 0011   11001100   Shift
    0000 0000 0111   10011000   Shift
    0000 0000 1010   10011000   Add 3 to ONES, since it was 7
    0000 0001 0101   00110000   Shift
    0000 0001 1000   00110000   Add 3 to ONES, since it was 5
    0000 0011 0000   01100000   Shift
    0000 0110 0000   11000000   Shift
    0000 1001 0000   11000000   Add 3 to TENS, since it was 6
    0001 0010 0001   10000000   Shift
    0010 0100 0011   00000000   Shift
       2    4    3
           BCD

Now eight shifts have been performed, so the algorithm terminates. The BCD digits to the left of the 'original register' space display the BCD encoding of the original value 243.

Another example for the double-dabble algorithm, with the value 65244 (decimal):

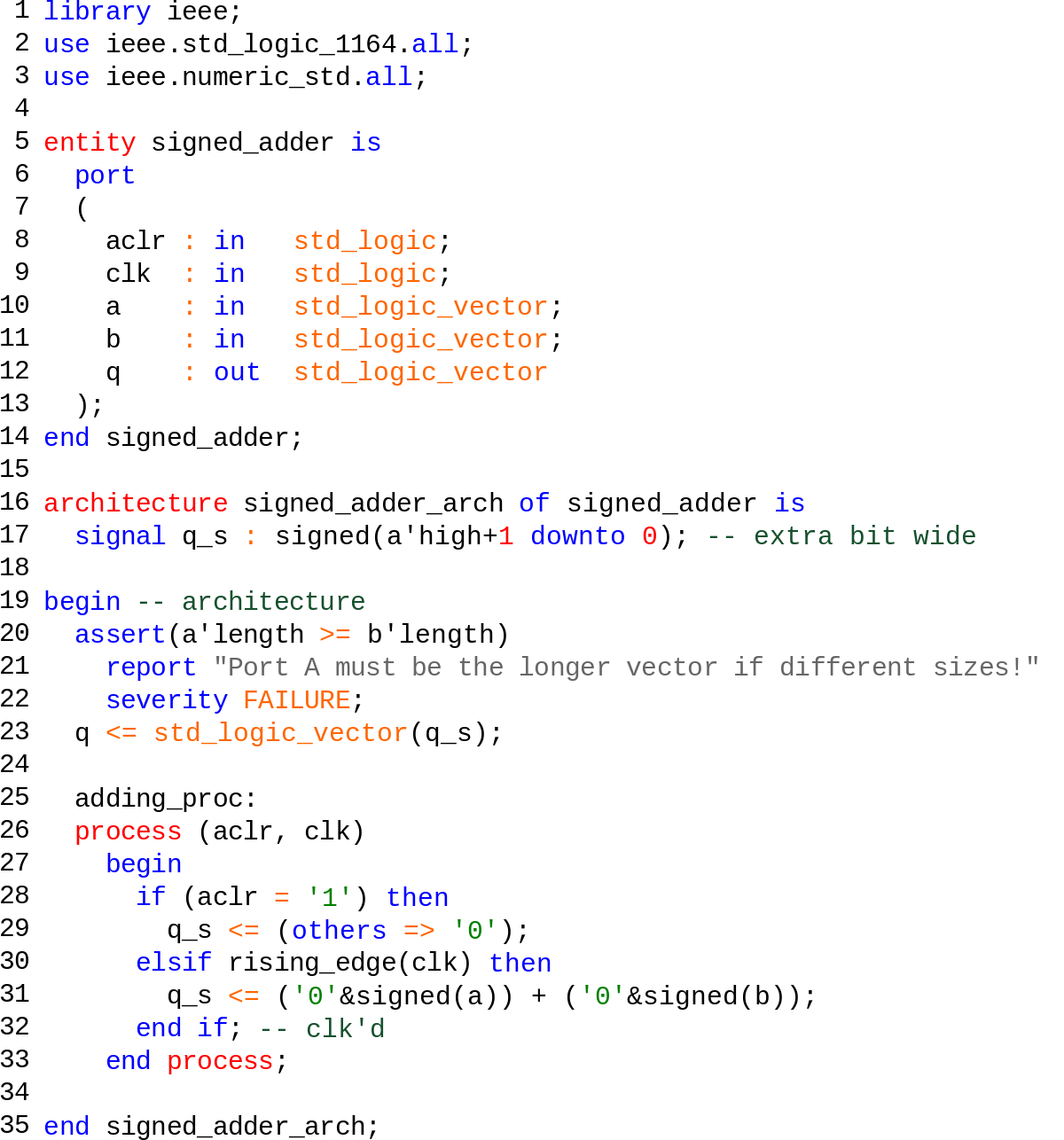
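The shift-and-add-3 steps described above can be modelled in a few lines of Python. This is only a software sketch of the bit manipulation, not synthesizable VHDL; the function name `double_dabble` and its parameters are made up for illustration. The scratch space is held in one integer, with the original register in the low `n_bits` bits and the BCD digits above it.

```python
def double_dabble(value: int, n_bits: int) -> list[int]:
    """Return the BCD digits of an unsigned n_bits-wide value, most significant first.

    Hypothetical helper: models the scratch register as a single Python integer.
    """
    if n_bits <= 0 or not 0 <= value < (1 << n_bits):
        raise ValueError("value must fit in n_bits")

    n_digits = -(-n_bits // 3)   # ceil(n_bits / 3) BCD digits are enough
    scratch = value              # BCD area (the upper bits) starts at zero

    for _ in range(n_bits):
        # Before each shift, add 3 to every BCD digit that is greater than 4.
        for d in range(n_digits):
            pos = n_bits + 4 * d
            if ((scratch >> pos) & 0xF) > 4:
                scratch += 3 << pos
        # Shift the whole scratch space left by one bit; the top bit of the
        # original register moves into the lowest bit of the ONES digit.
        scratch <<= 1

    bcd = scratch >> n_bits      # discard the (now empty) original register
    return [(bcd >> (4 * d)) & 0xF for d in reversed(range(n_digits))]


print(double_dabble(243, 8))     # digits of the 8-bit example traced above
print(double_dabble(65244, 16))  # the 16-bit example; leading digit is a spare zero
```

Because ceil(n/3) digits are reserved, a 16-bit input yields six digits, the first of which is always zero.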

library IEEE; use IEEE.STD_LOGIC_1164.ALL; use IEEE.NUMERIC_STD.ALL;

SBA program details. Snippet: 16-bit binary to BCD converter. Author: Miguel A. Risco-Castillo. Description: 16-bit binary to BCD converter using the 'Double Dabble' algorithm. Before calling it, fill 'binin' with the value to convert; after the call, the snippet places the BCD result of the conversion in the variable 'bcdout'. Put the snippet in the routines section of the user program.

This is really getting nowhere. Do you know ANYTHING about USB or FPGAs at all? First of all, using USB to communicate between an FPGA and a PC is like trying to fly a 747 to get to your friend's home next door. USB is a complicated, difficult protocol, and in NO WAY does it communicate in ASCII, unless you have a special device or program that does this for you. If you use a serial port, you can use a simple terminal program on the PC and a simple RS-232 IP core on the FPGA for the communication; then you can write simple code to convert your binary data to hex values in ASCII, which should be simple enough to do.

The easiest way to do 'byte' to 'BCD expansion' is a simple case statement with all 256 options explicitly defined.

The operation is then a basic boolean 8-bit LUT function for each output bit. NOTE: you will need to specify a 'no ROM extract' synthesis attribute to stop Quartus or XST inferring a ROM block. The following will reduce to a 31-LUT implementation in the FPGA on a V5, S6, V6, Arria or Stratix etc. case (inbyte) is when x"00" => val ...

I will rephrase my question. I need a function in VHDL or Verilog with:

    RegisterIN: IN std_logic_vector(7 downto 0);
    Numb1: OUT std_logic_vector(3 downto 0);
    Numb2: OUT std_logic_vector(3 downto 0);
    Numb3: OUT std_logic_vector(3 downto 0);

If RegisterIN is 10011001 (153 in decimal), then Numb1 must be 0001 (1 in decimal), Numb2 must be 0101 (5 in decimal) and Numb3 must be 0011 (3 in decimal). That is it!

I have done something similar in a function for an AVR to convert a long unsigned integer to a string. You use subtraction instead of division: loop, subtracting 100 from the input number and increasing the first output digit by 1 after each subtraction, until the number is lower than 100. Then you do the same using 10 (increasing the second output digit) and 1 (increasing the third output digit) until the number is 0.

    234 - 100   output 100 (Numb1=1, Numb2=0, Numb3=0)
    134 - 100   output 200
    034         is lower than 100
    034 - 10    output 210
    024 - 10    output 220
    014 - 10    output 230
    004         is lower than 10
    004 - 1     output 231
    003 - 1     output 232
    002 - 1     output 233
    001 - 1     output 234 (Numb1=2, Numb2=3, Numb3=4)
    000         stop

I don't know if this takes many resources in VHDL because I haven't tried it, but it can be done.
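The repeated-subtraction approach traced above can be sketched in Python as follows. This is a software model only; the function name `byte_to_digits` is hypothetical, and the result order follows the Numb1, Numb2, Numb3 outputs from the question.

```python
def byte_to_digits(value: int) -> tuple[int, int, int]:
    """Split an 8-bit value into (hundreds, tens, ones) using only subtraction."""
    if not 0 <= value <= 255:
        raise ValueError("value must fit in one byte")

    digits = []
    for weight in (100, 10, 1):
        count = 0
        # Subtract the current weight until the remainder is smaller than it,
        # counting how many subtractions were needed.
        while value >= weight:
            value -= weight
            count += 1
        digits.append(count)
    return digits[0], digits[1], digits[2]


print(byte_to_digits(0b10011001))  # the 153 example from the question
print(byte_to_digits(234))         # the value traced step by step above
```

For a byte the loops run at most 2 + 9 + 9 = 20 times in total (at most 2 hundreds, 9 tens and 9 ones), which is why the approach is cheap on a small microcontroller; in hardware it would need either a multi-cycle state machine or an unrolled comparator chain.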

VHDL Predefined Types from the package standard. The type and subtype names below are automatically defined. They are not technically reserved words, but save yourself a lot of grief and do not redefine them. Note that enumeration literals such as 'true' and 'false' are not technically reserved words and can easily be overloaded, but save future readers of your code the confusion. It is confusing enough that '0' and '1' are enumeration literals of both type Character and type Bit.

"01101001" is of type string, bit_vector, std_logic_vector and more. There is no automatic type conversion in VHDL, yet users and libraries may provide almost any type conversion. For numeric types, integer(X) yields the rounded value of the real variable X as an integer, and real(I) yields the value of the integer variable I as a real. Notes: Reserved words are in bold type. Type names are alphabetical and begin with an initial uppercase letter.

Enumeration literals are in plain lower case.

Hello, I am using the FFT v3.2 core from Xilinx. I have Xilinx ISE/ModelSim. The outputs of the core are xkre and xnre.

1) I am expanding the Xilinx-provided test bench file. I am trying to write the outputs to an .out file. Below are the lines of the code I'm using for that.

if (done='1' and busy='1') then i1 ...

... => xnre, xnim => xnim, start => start, nfft => nfft, nfftwe => nfftwe, fwdinv => fwdinv, fwdinvwe => fwdinvwe, scalesch => scalesch, scaleschwe => scaleschwe, ce => ce, clk => clk, rst => rst, xkre => xkre, xkim => xkim, xnindex => xnindex, xkindex => xkindex, rfd => rfd, busy => busy, dv => dv, edone => edone, done => done, ovflo => ovflo, locked => locked );

-- Clock
clockproc: process begin wait for HALFCLOCKPERIOD; clk ...

The writing is working now (I shrank the input file too much, so there was nothing to be processed).

I still have a question about converting two's complement to integer. Vitaliy

Vitaliy wrote: Below are the lines of the code I'm using for that.

if (done='1' and busy='1') then i1 ... end if; while (busy='1' and i1=1) loop write(myline, xkre); writeline(myoutput, myline); wait until clk='1'; end loop;

Basically, I want to write a new value each clock cycle.

However, the above procedure prevents the output from being produced (meaning xkre and xnre stay at 0; however, xkindex does increment at each clock cycle after the done bit is issued by the core). I have also tried the following, just to see if writing anything (a simple counter in this case) during the time the data is supposed to be produced will prevent the data from being output. And, indeed, the data was not produced (xkim and xkre remained at zero).

if (done='1' and busy='1') then i1 ... end if; while (busy='1' and i1=1) loop write(myline, i2); writeline(myoutput, myline); i2 ... wait until clk='1'; end loop;

Any suggestions?

2) The output of the core is two's complement. Is there a standard procedure in VHDL to transform the data from two's complement to integer?

Thanks, Vitaliy, Ryerson University

-- Input 9.375 MHz (period - 106.67ns) signal.


To convert a std_logic_vector (e.g. Myslv) that is being interpreted as two's complement to an integer, use to_integer(signed(Myslv)). KJ

I'm getting this error in ModelSim: # Error: designtoptb.vhd(165): (vcom-1137) Identifier 'signed' is not visible.


Making two objects with the name 'signed' directly visible via use clauses results in a conflict; neither object is made directly visible. (LRM Section 10.4) Vitaliy

In to_integer(signed(Myslv)), does signed relate to integer or to arithmetic (I think integer, but just checking)? Because there are two libraries: ieee.numeric_std.signed and ieee.std_logic_arith.signed. So, when I specify the complete name of the library (i.e. ieee.numeric_std.signed), the compiler is happy. Vitaliy

Don't use both numeric_std and std_logic_arith. They have different definitions for the same function names. If you do use both, then you have to specify which one each library call belongs to.

'signed' relates to how the std_logic_vector is supposed to be interpreted. By themselves, std_logic_vectors have no implicit 'sign' bit or any sort of numerical interpretation, so, for example, "10000000" could mean either 128 (decimal), or a negative number, or just a collection of 8 bits of 'stuff'.

signed("10000000") means that the bit on the left is to be interpreted as a sign bit and the vector is a two's complement representation of a number, which means that in this case we're talking about a negative number. Signed 8-bit numbers can represent anything from -128 to +127. There is also the function unsigned, which says that there is no sign bit in the std_logic_vector argument, so unsigned("10000000") is a positive number, in this case 128. If you're only dealing with things that cannot be negative, there is no value in a 'sign' bit; unsigned 8-bit numbers can represent anything from 0 to 255.
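The difference between the two interpretations can be checked with a small Python model. This is not VHDL and does not use numeric_std; the helper names `to_unsigned_int` and `to_signed_int` are made up here to show the arithmetic that the signed and unsigned interpretations imply for the same bit pattern.

```python
def _check_bits(bits: str) -> None:
    """Reject anything that is not a non-empty string of '0' and '1' characters."""
    if not bits or set(bits) - {"0", "1"}:
        raise ValueError("expected a non-empty string of 0s and 1s")


def to_unsigned_int(bits: str) -> int:
    """Interpret the bit string as a plain binary number (no sign bit)."""
    _check_bits(bits)
    return int(bits, 2)


def to_signed_int(bits: str) -> int:
    """Interpret the bit string as two's complement (leftmost bit is the sign)."""
    _check_bits(bits)
    value = int(bits, 2)
    if bits[0] == "1":
        # A set sign bit means the pattern stands for value - 2**width.
        value -= 1 << len(bits)
    return value


print(to_unsigned_int("10000000"))  # 128
print(to_signed_int("10000000"))    # -128
print(to_signed_int("01111111"))    # 127
```

The same eight bits give 128 or -128 depending only on the chosen interpretation, which is why the vector has to be given a signed or unsigned interpretation before it can be converted to an integer.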

To convert the std_logic_vector to an integer via the to_integer function, you need to supply it with an argument that has a specific interpretation, which is what the signed and unsigned functions provide.

Vitaliy wrote: Because there are two libraries: ieee.numeric_std.signed and ieee.std_logic_arith.signed

Don't use std_logic_arith; it has problems and it is not a standard.

Vitaliy wrote: So, when I specify the complete name of the library (i.e. ieee.numeric_std.signed), the compiler is happy.

Since both libraries have a 'signed' function and the compiler can't tell the difference between the two of them by their usage, specifying the full path name to the function that you want is the workaround. Sometimes this is handy, but in this particular instance you'd be better off getting rid of std_logic_arith.

By the way, since the title of the thread is 'Writing output signals to text file (VHDL)', I'm guessing that you actually want to write out this integer as text, in which case you'll probably need to convert that integer to a text string in order to write it to a text file. This can be done with integer'image(Myinteger), or by combining it with the conversion of the std_logic_vector to an integer: integer'image(to_integer(signed(Myslv))). KJ

Thanks, I realized from the error that each library has a signed function and that confuses the compiler, but I didn't know std_logic_arith is not a standard and that I have to use numeric_std. When would one want to use the std_logic_arith library over numeric_std?

integer'image returns the textual representation of 'int', but what is wrong with simply writing 'int'? Or I guess I should ask what the difference between the two is? (Is the output of 'int' of type integer and the output of integer'image of type char (or is it a string of integers?)?) Vitaliy


'Never' would be the short answer to when you should use std_logic_arith when writing new code. If instead you're maintaining and supporting existing code that somebody else wrote and they used std_logic_arith, then, in order to avoid introducing new bugs caused by subtle differences between the two libraries, you might want to have this legacy code continue to use std_logic_arith if you're making only otherwise minor changes.

I'm not sure what exactly you mean here or exactly what file format you're really trying to write. Try having the simulation write out the file and see what you get. If the file comes out in the format that you want, then you're done. KJ

With VHDL you can write binary files. This is the default.

If you write to a binary file, this will be in a machine-specific binary format that will be difficult for a human to read, even with a hex-capable file editor. If you need a file that humans can read, use text files via STD.TEXTIO package procedures, as advised above.

As others said, use ieee.numeric_std, never ieee.std_logic_unsigned, which is not really an IEEE package. However, if you are using tools with VHDL 2008 support, you can use the new package ieee.numeric_std_unsigned, which essentially makes std_logic_vector behave like unsigned. Also, since I didn't see it stated explicitly, here's an actual code example to convert from an (unsigned) integer to a std_logic_vector: use ieee.numeric_std.all;

As LoneTech says, use ieee.numeric_std is your friend. You can convert a std_logic_vector to an integer, but you'll have to cast it as signed or unsigned first (as the compiler has no idea which you mean). VHDL is a strongly typed language. Fundamentally, I'd change your 7seg converter to take in an integer (or actually a natural, given that it's only going to deal with positive numbers); the conversion is then a simple array lookup.


Set up a constant array with the conversions in it and just index into it with the integer you use on the entity as an input.

Let's say that your 4-bit counter had an INTEGER output SOMEINTEGER, and you wanted to convert it to a 4-bit STD_LOGIC_VECTOR SOMEVECTOR ... '0'); end if;

EDIT: You shouldn't need to declare the variable as an integer. Try changing the declaration to std_logic_vector instead.

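The + and - operators work on std_logic_vectors, provided a package that defines them is in use (such as the non-standard std_logic_unsigned, or numeric_std_unsigned in VHDL 2008). As the main answer says, the recommended method is as follows: use ieee.numeric_std.all;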
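The code for the integer-to-vector conversion did not survive in the text above. With numeric_std the usual idiom is std_logic_vector(to_unsigned(value, width)); what that produces can be modelled in Python as below. The function name `int_to_bits` is hypothetical, and raising an error on overflow is a choice made for this sketch rather than a description of what a VHDL simulator does.

```python
def int_to_bits(value: int, width: int) -> str:
    """Return value as a fixed-width binary string, most significant bit first."""
    if width <= 0:
        raise ValueError("width must be positive")
    if not 0 <= value < (1 << width):
        # Assumption of this sketch: refuse values that do not fit,
        # instead of silently dropping the upper bits.
        raise ValueError("value does not fit in the requested width")
    return format(value, f"0{width}b")


# A 4-bit counter value converted to a 4-bit vector, as in the example above.
print(int_to_bits(5, 4))   # 0101
print(int_to_bits(15, 4))  # 1111
```

The width has to be stated explicitly because an integer carries no bit width of its own, which is also why the VHDL conversion function takes a size argument.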
